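1. Overall architecture

Browser / Client A
        |
        | (signaling: ws / http / mqtt / other...)
        |
Signaling server
        |
        |
        |
Client B (C++ / browser / mobile)

Key point: the signaling server does not take part in the data transfer. It only relays the negotiation messages.

The actual data flows directly between the two peers:

A <------ P2P ------> B

2. Connection process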

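The whole connection process can be split into 6 stages:

1. Signaling setup
2. SDP negotiation
3. ICE candidate gathering
4. ICE connectivity checks
5. DTLS security handshake
6. Media / DataChannel setup

3. Complete execution flow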

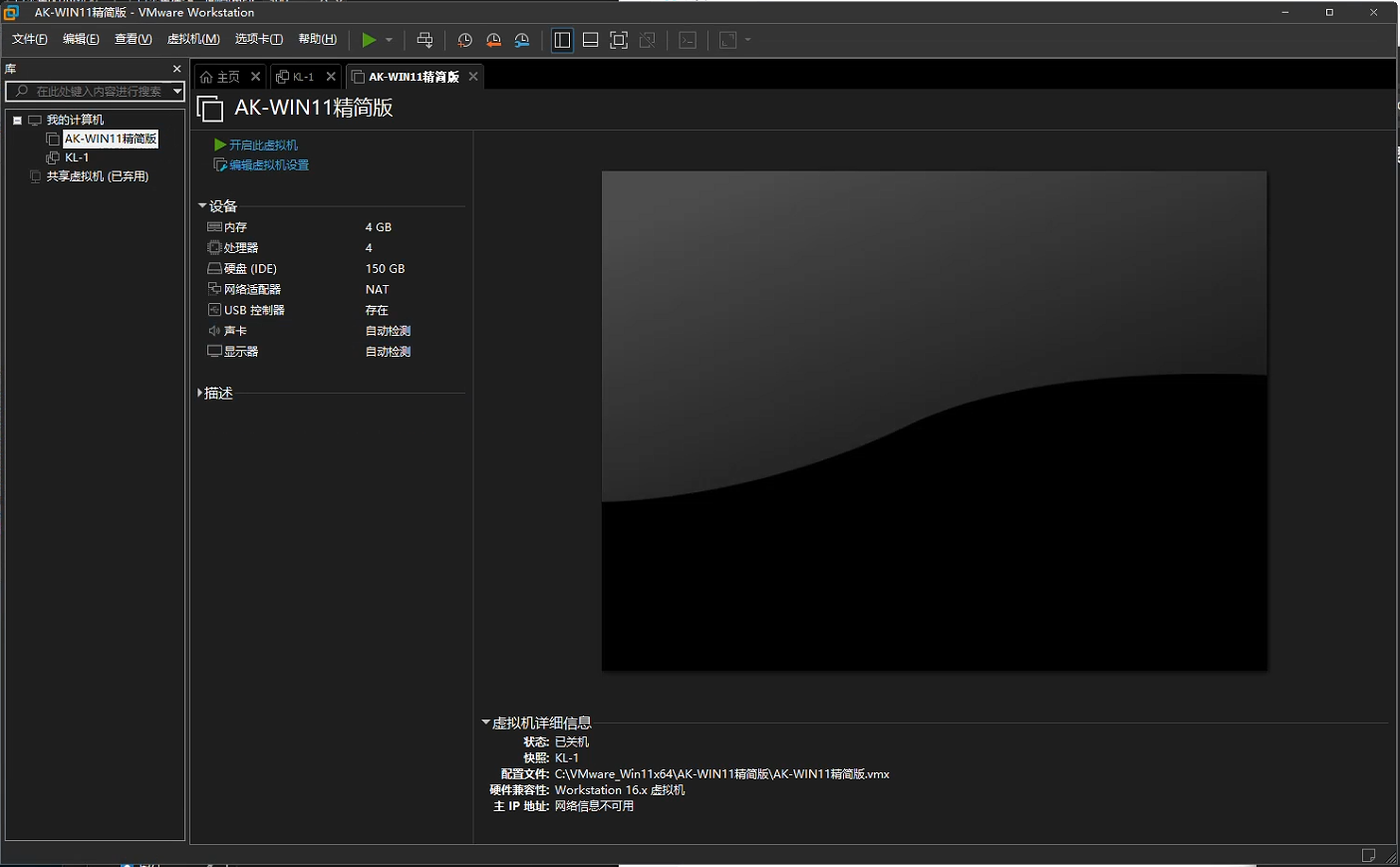

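The setup used in this article: the web page displays the video, and the C++ side pushes the video stream to the page.

1. Create the PeerConnection

Browser:

const pc = new RTCPeerConnection(config)

C++:

pc = std::make_shared<rtc::PeerConnection>(config);

This step only creates a WebRTC connection object. No network connection exists yet.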

2. Establish the signaling channel

Browser / C++:

WebSocket
HTTP
MQTT
Socket

Any of these works. This article uses WebSocket.
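Whatever transport is chosen, the signaling server only has to pass messages from one peer to the other without looking inside them. A minimal in-memory sketch of that relay behavior follows. The `SignalingRelay` class and the peer names are hypothetical and stand in for a real WebSocket server, which this article does not show.

```javascript
// A toy in-memory relay: it forwards every message from one peer to all
// other registered peers and never inspects the payload.
class SignalingRelay {
  constructor() {
    this.peers = new Map(); // peerId -> callback(message)
  }

  // Register a peer; returns a send() function bound to that peer.
  join(peerId, onMessage) {
    this.peers.set(peerId, onMessage);
    return (message) => this.broadcast(peerId, message);
  }

  leave(peerId) {
    this.peers.delete(peerId);
  }

  // Forward the raw message to everyone except the sender.
  broadcast(senderId, message) {
    for (const [peerId, deliver] of this.peers) {
      if (peerId !== senderId) deliver(message);
    }
  }
}

// Usage: the browser sends an offer, the native side receives it unchanged.
const relay = new SignalingRelay();
const inboxBrowser = [];
const inboxNative = [];
const sendFromBrowser = relay.join("browser", (m) => inboxBrowser.push(m));
const sendFromNative = relay.join("native", (m) => inboxNative.push(m));

sendFromBrowser(JSON.stringify({ type: "offer", sdp: "..." }));
sendFromNative(JSON.stringify({ type: "answer", sdp: "..." }));

console.log(inboxNative);  // the offer, exactly as sent
console.log(inboxBrowser); // the answer, exactly as sent
```

Because the relay treats messages as opaque strings, the same logic works for SDP and for ICE candidates alike.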

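3. Generate the offer (initiating side)

The side that initiates the connection runs:

const offer = await pc.createOffer()
await pc.setLocalDescription(offer)

WebRTC then generates an SDP offer, which contains:

Supported codecs
Media direction
ICE information
DTLS information
SSRC
Media types

The offer is then sent:

offer -> signaling server -> remote peer

4. Receive the offer (answering side)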

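The remote peer receives:

{
"type":"offer",
"sdp":"..."
}

and runs:

pc->setRemoteDescription(...)

This step does a lot of work:

Parses the SDP
Creates the transceivers
Prepares the media tracks
Prepares ICE

It then generates the answer:

pc->setLocalDescription(rtc::Description::Type::Answer)

5. Return the answer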

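The C++ side sends:

{
"type":"answer",
"sdp":"..."
}

When the browser receives it:

pc.setRemoteDescription(answer)

At this point SDP negotiation is complete, but the connection is not yet established.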
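To make the offer contents listed in step 3 more concrete, here is a small sketch that pulls the media types, direction, codecs, ICE username fragment and DTLS fingerprint out of an SDP string. The `summarizeSdp` function is a hypothetical helper, and the sample SDP is hand-written and heavily abbreviated with placeholder values; it is not output captured from a browser.

```javascript
// Summarize the parts of an SDP blob that the offer/answer exchange relies on.
function summarizeSdp(sdp) {
  const summary = { iceUfrag: null, fingerprint: null, media: [] };
  let current = null; // media section being parsed; null while in the session part

  for (const line of sdp.split(/\r?\n/)) {
    if (line.startsWith("m=")) {
      // m=<kind> <port> <proto> <formats...>
      const [kind, , proto, ...formats] = line.slice(2).split(" ");
      // direction stays null when the section does not state one
      current = { kind, proto, formats, direction: null, codecs: [] };
      summary.media.push(current);
    } else if (line.startsWith("a=ice-ufrag:")) {
      summary.iceUfrag = summary.iceUfrag ?? line.slice("a=ice-ufrag:".length);
    } else if (line.startsWith("a=fingerprint:")) {
      summary.fingerprint = summary.fingerprint ?? line.slice("a=fingerprint:".length);
    } else if (current && /^a=(sendrecv|sendonly|recvonly|inactive)$/.test(line)) {
      current.direction = line.slice(2);
    } else if (current && line.startsWith("a=rtpmap:")) {
      // a=rtpmap:<payload type> <codec>/<clock rate>
      current.codecs.push(line.split(" ")[1].split("/")[0]);
    }
  }
  return summary;
}

// Abbreviated sample: one receive-only video section and one data channel section.
const sampleOffer = [
  "v=0",
  "o=- 0 0 IN IP4 127.0.0.1",
  "s=-",
  "t=0 0",
  "a=ice-ufrag:abcd",
  "a=fingerprint:sha-256 AA:BB",
  "m=video 9 UDP/TLS/RTP/SAVPF 96",
  "a=recvonly",
  "a=rtpmap:96 H264/90000",
  "m=application 9 UDP/DTLS/SCTP webrtc-datachannel",
  "a=sctp-port:5000",
].join("\r\n");

console.log(JSON.stringify(summarizeSdp(sampleOffer), null, 2));
```

The sample mirrors the shape of the offer produced by the page in this article, which adds a `recvonly` video transceiver and one data channel.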

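6. ICE candidate gathering

Both ends start gathering addresses from:

Local network interfaces
VPN
Public (NAT-mapped) addresses
TURN servers

Typical candidate types:

host
srflx
relay

Gathering starts as soon as a side calls setLocalDescription, so candidates can already appear while the answer is still in flight.

The browser fires:

pc.onicecandidate

The C++ side fires:

pc->onLocalCandidate

Each candidate is sent to the other side as soon as it is generated.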
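Each candidate travels as a single text line whose `typ` field carries one of the types listed above. The sketch below parses such a line. The `parseCandidate` helper is hypothetical, and the sample lines use documentation IP addresses rather than real gathered candidates.

```javascript
// Parse one ICE candidate line into its main fields.
function parseCandidate(line) {
  // Accept both "candidate:..." and the SDP attribute form "a=candidate:...".
  const body = line.replace(/^a=/, "").replace(/^candidate:/, "");
  const fields = body.trim().split(/\s+/);
  const [foundation, component, transport, priority, address, port, typKeyword, type] = fields;
  if (typKeyword !== "typ") throw new Error(`not a candidate line: ${line}`);

  const parsed = {
    foundation,
    component: Number(component),
    transport: transport.toLowerCase(),
    priority: Number(priority),
    address,
    port: Number(port),
    type, // host, srflx, prflx or relay
  };

  // The remaining fields are key/value extensions such as raddr/rport.
  for (let i = 8; i + 1 < fields.length; i += 2) {
    if (fields[i] === "raddr") parsed.relatedAddress = fields[i + 1];
    if (fields[i] === "rport") parsed.relatedPort = Number(fields[i + 1]);
  }
  return parsed;
}

const samples = [
  "candidate:1 1 UDP 2130706431 192.168.1.10 50000 typ host",
  "a=candidate:2 1 UDP 1694498815 203.0.113.7 50001 typ srflx raddr 192.168.1.10 rport 50000",
  "candidate:3 1 UDP 16777215 198.51.100.9 3478 typ relay raddr 203.0.113.7 rport 50001",
];

for (const line of samples) {
  const c = parseCandidate(line);
  console.log(`${c.type}: ${c.address}:${c.port} (priority ${c.priority})`);
}
```

A `host` candidate is a local interface address, `srflx` is the public mapping learned from a STUN server, and `relay` is an address allocated on a TURN server.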

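7. Exchange candidates

Both sides keep exchanging candidates and call:

addIceCandidate (addRemoteCandidate in libdatachannel)

Each side now has:

A set of remote addresses
A set of local addresses

8. ICE connectivity checks (the most critical step)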
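The local and remote address sets from step 7 are combined into candidate pairs, and the pairs are checked in priority order. The sketch below is a simplified illustration using the priority formulas and recommended type preferences from RFC 8445. The function names, the single local preference value, and the choice of A as the controlling agent are assumptions made for the example; a real ICE agent also prunes and deduplicates pairs.

```javascript
// Recommended type preferences from RFC 8445 (higher is preferred).
const TYPE_PREFERENCE = { host: 126, prflx: 110, srflx: 100, relay: 0 };

// Candidate priority:
//   2^24 * type preference + 2^8 * local preference + (256 - component ID)
function candidatePriority(type, localPreference = 65535, componentId = 1) {
  return TYPE_PREFERENCE[type] * 2 ** 24 + localPreference * 2 ** 8 + (256 - componentId);
}

// Pair priority: 2^32 * min(G, D) + 2 * max(G, D) + (G > D ? 1 : 0),
// where G belongs to the controlling agent and D to the controlled agent.
// BigInt is used because the result exceeds Number's safe integer range.
function pairPriority(controlling, controlled) {
  const g = BigInt(controlling);
  const d = BigInt(controlled);
  const min = g < d ? g : d;
  const max = g > d ? g : d;
  return (1n << 32n) * min + 2n * max + (g > d ? 1n : 0n);
}

// Build every local/remote combination and sort it, highest priority first.
function buildCheckList(localTypes, remoteTypes) {
  const pairs = [];
  for (const local of localTypes) {
    for (const remote of remoteTypes) {
      const priority = pairPriority(candidatePriority(local), candidatePriority(remote));
      pairs.push({ local, remote, priority });
    }
  }
  // BigInt values are compared explicitly rather than subtracted.
  return pairs.sort((a, b) => (a.priority < b.priority ? 1 : a.priority > b.priority ? -1 : 0));
}

const checkList = buildCheckList(["host", "srflx", "relay"], ["host", "srflx", "relay"]);
for (const { local, remote, priority } of checkList) {
  console.log(`A(${local}) -> B(${remote}) priority=${priority}`);
}
```

With these preferences the direct host-to-host pair is tried first and the relay-to-relay pair last, which matches the intuition that a relayed path is the fallback.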

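ICE pairs up the local and remote candidates and tries the combinations, for example:

A(host) -> B(host)
A(host) -> B(srflx)
A(srflx) -> B(srflx)
A(relay) -> B(relay)

For each pair it sends a STUN Binding Request. If the request succeeds, the candidate pair succeeds.

State transitions:

checking -> connected -> completed

9. Establish DTLS after ICE succeeds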

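Once a usable path is found, the DTLS handshake starts.

DTLS is roughly TLS over UDP. Its purpose:

Exchange keys
Establish an encrypted channel

The log shows:

DTLS handshake finished

10. Establish SRTP / SCTP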

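After DTLS succeeds, keys are derived from the handshake:

SRTP key

There are two data paths:

Media: SRTP, used for audio and video
Data channel: SCTP, used for DataChannel

The log shows:

SCTP connected

11. PeerConnection connected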

Final state:

PeerConnection state = connected

This means the P2P connection has been established.

12. Media / DataChannel start working
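With the connection up, STUN checks, DTLS records (which carry SCTP for the DataChannel) and SRTP media typically share the one UDP path that ICE selected. A receiver can tell them apart by the first byte of each packet, using the ranges given in RFC 7983. The `classifyPacket` helper below is a hypothetical sketch of that idea, not code from any particular library.

```javascript
// Classify a received UDP payload by its first byte (ranges from RFC 7983).
function classifyPacket(bytes) {
  if (bytes.length === 0) return "empty";
  const first = bytes[0];
  if (first <= 3) return "stun";                        // ICE checks and keepalives
  if (first >= 20 && first <= 63) return "dtls";        // handshake, and SCTP for DataChannel
  if (first >= 128 && first <= 191) return "rtp/rtcp";  // SRTP / SRTCP media
  return "other";
}

// First bytes of typical packets:
//   0x00 -> STUN Binding Request (message type 0x0001)
//   0x16 -> DTLS handshake record (content type 22)
//   0x17 -> DTLS application data record (content type 23)
//   0x80 -> RTP version 2 header
const examples = [
  Uint8Array.of(0x00, 0x01),
  Uint8Array.of(0x16),
  Uint8Array.of(0x17),
  Uint8Array.of(0x80),
];

for (const packet of examples) {
  console.log(`0x${packet[0].toString(16).padStart(2, "0")} -> ${classifyPacket(packet)}`);
}
```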

From here on:

DataChannel
dc.onopen
dc.onmessage

Video
ontrack
sendFrame
RTP

4. Flowchart summary

Create PeerConnection
        │
Establish signaling channel
        │
createOffer
        │
send offer
        │
setRemoteDescription
        │
createAnswer
        │
send answer
        │
Both sides gather ICE candidates
        │
Exchange candidates
        │
ICE connectivity check
        │
ICE connected
        │
DTLS handshake
        │
SRTP / SCTP established
        │
PeerConnection connected
        │
Media / DataChannel start transferring

5. The four core WebRTC APIs

Almost every program is built around these four:

setLocalDescription
setRemoteDescription
addIceCandidate
onIceCandidate

6. The execution flow in one sentence

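Establishing a WebRTC connection boils down to:

1. Exchange SDP (capability negotiation)
2. Exchange ICE candidates (address exchange)
3. ICE finds a working path
4. DTLS establishes a secure connection
5. SRTP/SCTP start carrying data

7. Code examples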

CMakeLists.txt

cmake_minimum_required(VERSION 3.31)

project(StudioControlPro)

set(CMAKE_CXX_STANDARD 20)

set(CMAKE_CXX_STANDARD_REQUIRED ON)

set(ENV{http_proxy} "http://127.0.0.1:7890")

set(ENV{https_proxy} "http://127.0.0.1:7890")

set(BUILD_SHARED_LIBS OFF)

set(FIND_LIBRARY_USE_STATIC_LIBS ON)

if (MSVC)

set(CMAKE_MSVC_RUNTIME_LIBRARY "MultiThreaded$<$<CONFIG:Debug>:Debug>")

add_compile_options(/utf-8)

add_compile_options("$<$<CONFIG:Release>:/Zi>")

add_link_options("$<$<CONFIG:Release>:/DEBUG>")

add_link_options("$<$<CONFIG:Release>:/OPT:REF>")

add_link_options("$<$<CONFIG:Release>:/OPT:ICF>")

endif()

find_package(CURL CONFIG REQUIRED)

find_package(spdlog CONFIG REQUIRED)

find_package(nlohmann_json CONFIG REQUIRED)

find_package(magic_enum CONFIG REQUIRED)

include(FetchContent)

# Configuration for libdatachannel

set(NO_MEDIA OFF CACHE BOOL "Enable media support" FORCE)

set(NO_WEBSOCKET OFF CACHE BOOL "Enable WebSocket support" FORCE)

set(NO_DTLS OFF CACHE BOOL "Enable DTLS support" FORCE)

set(NO_SRTP OFF CACHE BOOL "Enable SRTP support" FORCE)

set(NO_SCTP OFF CACHE BOOL "Enable SCTP support" FORCE)

set(NO_EXAMPLES ON CACHE BOOL "Disable examples" FORCE)

set(NO_TESTS ON CACHE BOOL "Disable tests" FORCE)

# Prefer bundled dependencies to avoid vcpkg mismatch issues during FetchContent

set(USE_SYSTEM_SRTP OFF CACHE BOOL "Use bundled libsrtp" FORCE)

set(USE_SYSTEM_JUICE OFF CACHE BOOL "Use bundled libjuice" FORCE)

set(USE_SYSTEM_SCTP OFF CACHE BOOL "Use bundled usrsctp" FORCE)

# OpenSSL is usually found from system/vcpkg, which is fine.

FetchContent_Declare(

libdatachannel

GIT_REPOSITORY https://github.com/paullouisageneau/libdatachannel.git

GIT_TAG v0.24.1

)

# This will download and ADD_SUBDIRECTORY libdatachannel

FetchContent_MakeAvailable(libdatachannel)

find_package(libyuv CONFIG REQUIRED)

if (MSVC)

set(WINSDK_VER "${CMAKE_VS_WINDOWS_TARGET_PLATFORM_VERSION}")

if (NOT WINSDK_VER)

message(FATAL_ERROR "CMAKE_VS_WINDOWS_TARGET_PLATFORM_VERSION is empty")

endif()

set(WINSDK_UM_LIB_DIR

"C:/Program Files (x86)/Windows Kits/10/Lib/${WINSDK_VER}/um/x64")

if (NOT EXISTS "${WINSDK_UM_LIB_DIR}/ncrypt.lib")

message(FATAL_ERROR "ncrypt.lib not found: ${WINSDK_UM_LIB_DIR}/ncrypt.lib")

endif()

set(FFMPEG_DEPENDENCY_ncrypt_RELEASE

"${WINSDK_UM_LIB_DIR}/ncrypt.lib"

CACHE FILEPATH "FFMPEG ncrypt release" FORCE)

set(FFMPEG_DEPENDENCY_ncrypt_DEBUG

"${WINSDK_UM_LIB_DIR}/ncrypt.lib"

CACHE FILEPATH "FFMPEG ncrypt debug" FORCE)

endif()

find_package(FFMPEG REQUIRED)

add_executable(${CMAKE_PROJECT_NAME}

main.cpp

)

target_include_directories(${CMAKE_PROJECT_NAME} PRIVATE

"${CMAKE_SOURCE_DIR}/include"

${FFMPEG_INCLUDE_DIRS}

)

target_link_directories(${CMAKE_PROJECT_NAME} PRIVATE

${FFMPEG_LIBRARY_DIRS}

)

# Include directories need to be explicitly added if not using find_package

target_include_directories(${CMAKE_PROJECT_NAME} PRIVATE

${libdatachannel_SOURCE_DIR}/include

${libdatachannel_BINARY_DIR}/include # For export.hpp

)

# Link against the target created by FetchContent

# Note: libdatachannel creates a target named 'datachannel' or 'datachannel-static'

# We should check which one exists or link against 'datachannel' if it's an alias.

# Also, we need to link dependencies manually if not propagated?

# But FetchContent usually handles it.

target_link_libraries(${CMAKE_PROJECT_NAME} PRIVATE datachannel)

target_link_libraries(${CMAKE_PROJECT_NAME} PRIVATE

CURL::libcurl

spdlog::spdlog

nlohmann_json::nlohmann_json

magic_enum::magic_enum

yuv

${FFMPEG_LIBRARIES}

gdi32

user32

)

main.cpp

#include <iostream>

#include <memory>

#include <thread>

#include <chrono>

#include <string>

#include <vector>

#include <future>

#include <variant>

#include <atomic>

#include <regex>

// spdlog

#include <spdlog/spdlog.h>

#include <spdlog/fmt/bin_to_hex.h>

#include <spdlog/fmt/chrono.h>

#include <rtc/rtc.hpp>

#include <rtc/h264rtppacketizer.hpp>

#include <rtc/plihandler.hpp>

#include <rtc/rtcpnackresponder.hpp>

#include <rtc/rtcpsrreporter.hpp>

#include <rtc/rtppacketizationconfig.hpp>

#include <nlohmann/json.hpp>

// WebSocket client (signaling channel)

std::shared_ptr<rtc::WebSocket> ws;

// Peer-to-peer connection

std::shared_ptr<rtc::PeerConnection> pc;

// Data channel

std::shared_ptr<rtc::DataChannel> dc;

int main()

{

// Initialize libdatachannel logging

rtc::InitLogger(rtc::LogLevel::Debug);

// Create the rtc configuration

rtc::Configuration config;

// Add a STUN server

config.iceServers.emplace_back("stun:stun.l.google.com:19302");

// Disable auto-negotiation; offer/answer is handled manually

config.disableAutoNegotiation = true;

// Create the peer connection

pc = std::make_shared<rtc::PeerConnection>(config);

// Connection state callback

pc->onStateChange([](rtc::PeerConnection::State state) {

spdlog::info("PeerConnection state: {}", (int)state);

});

// Log ICE candidate gathering state changes

pc->onGatheringStateChange([](rtc::PeerConnection::GatheringState state) {

spdlog::info("PeerConnection gathering state: {}", (int)state);

});

// Called when the remote peer opens a data channel

pc->onDataChannel([](std::shared_ptr<rtc::DataChannel> channel) {

spdlog::info("DataChannel {} created", channel->label());

dc = channel;

// Data channel open callback

dc->onOpen([]() {

spdlog::info("DataChannel opened");

});

// Data channel message callback

dc->onMessage([](std::variant<rtc::binary, rtc::string> data) {

if (std::holds_alternative<rtc::string>(data))

{

spdlog::info("DataChannel message: {}", std::get<rtc::string>(data));

}

});

});

// Create the WebSocket client

ws = std::make_shared<rtc::WebSocket>();

// WebSocket open callback

ws->onOpen([]() {

spdlog::info("WebSocket connected");

});

// WebSocket error callback

ws->onError([](std::string s) {

spdlog::error("WebSocket error: {}", s);

});

// WebSocket message callback: handles incoming SDP and ICE candidates

ws->onMessage([](std::variant<rtc::binary, rtc::string> message) {

if (std::holds_alternative<rtc::string>(message))

{

try

{

auto msgStr = std::get<rtc::string>(message);

auto msg = nlohmann::json::parse(msgStr);

if (msg.contains("type"))

{

std::string type = msg["type"].get<std::string>();

std::string sdp = msg["sdp"].get<std::string>();

spdlog::info("[WS] Received SDP: {}", type);

pc->setRemoteDescription(rtc::Description(sdp, type));

if (type == "offer")

{

spdlog::info("[PC] Received offer, generating answer...");

pc->setLocalDescription(rtc::Description::Type::Answer);

}

}

else if (msg.contains("candidate"))

{

if (msg["candidate"].is_null() || msg["candidate"].get<std::string>().empty())

return;

std::string candidate = msg["candidate"].get<std::string>();

std::string sdpMid = msg["sdpMid"].get<std::string>();

pc->addRemoteCandidate(rtc::Candidate(candidate, sdpMid));

}

}

catch (const std::exception &e)

{

spdlog::error("[WS] Error parsing message: {}", e.what());

}

}

});

// Once the local SDP is generated, send it to the signaling server.

pc->onLocalDescription([](rtc::Description desc) {

spdlog::info("Local Description Generated: {}", desc.typeString());

nlohmann::json msg;

msg["type"] = desc.typeString();

msg["sdp"] = std::string(desc);

if (ws->isOpen())

ws->send(msg.dump());

});

// Once a local ICE candidate is generated, send it to the signaling server.

pc->onLocalCandidate([](rtc::Candidate cand) {

spdlog::info("Local Candidate Generated: {}", cand.candidate());

nlohmann::json msg;

msg["candidate"] = std::string(cand);

msg["sdpMid"] = cand.mid();

if (ws->isOpen())

ws->send(msg.dump());

});

// Connect to the WebSocket signaling server

ws->open("ws://localhost:8000");

// Keep the process alive; all work happens in the callbacks above

std::atomic<bool> running(true);

while (running)

{

std::this_thread::sleep_for(std::chrono::seconds(1));

}

return 0;

}
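The message handler in main.cpp implies a small wire format: a message with a `type` field carries SDP, and a message with a `candidate` field carries an ICE candidate. The sketch below restates that dispatch logic in JavaScript so the format is easier to see. `routeSignalingMessage` and its return shapes are hypothetical, and it is a loose mirror of the C++ handler rather than an exact port.

```javascript
// Decide what a raw signaling message is, following the same field checks
// as the C++ handler: "type" means SDP, "candidate" means ICE candidate.
function routeSignalingMessage(raw) {
  let msg;
  try {
    msg = JSON.parse(raw);
  } catch {
    return { kind: "invalid", reason: "not JSON" };
  }
  if (msg === null || typeof msg !== "object") {
    return { kind: "invalid", reason: "not an object" };
  }

  if ("type" in msg) {
    if (typeof msg.sdp !== "string") return { kind: "invalid", reason: "missing sdp" };
    // An offer must be answered; an answer completes the negotiation.
    return { kind: "description", type: msg.type, sdp: msg.sdp, needsAnswer: msg.type === "offer" };
  }

  if ("candidate" in msg) {
    // A null or empty candidate is skipped, as in the C++ code.
    if (!msg.candidate) return { kind: "ignored" };
    return { kind: "candidate", candidate: msg.candidate, sdpMid: msg.sdpMid ?? null };
  }

  return { kind: "unknown" };
}

console.log(routeSignalingMessage('{"type":"offer","sdp":"..."}'));
console.log(routeSignalingMessage('{"candidate":"candidate:1 1 UDP 1 192.0.2.1 9 typ host","sdpMid":"0"}'));
console.log(routeSignalingMessage('{"candidate":""}'));
```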

index.html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>WebRTC Desktop Stream</title>

<style>

body { font-family: monospace; padding: 20px; background: #1a1a1a; color: #ddd; }

.container { max-width: 1280px; margin: 0 auto; text-align: center; }

.status-bar { display: flex; justify-content: space-between; margin-bottom: 20px; }

.status { padding: 5px 10px; border-radius: 4px; font-weight: bold; background: #333; }

.status.connected { background-color: #28a745; color: #fff; }

.status.disconnected { background-color: #dc3545; color: #fff; }

#remoteVideo { width: 100%; max-width: 1280px; border: 2px solid #555; background: #000; }

.controls { margin: 20px 0; }

button { padding: 10px 20px; cursor: pointer; font-size: 16px; border-radius: 4px; border: none; margin: 0 10px; }

.btn-connect { background: #007bff; color: white; }

.btn-start { background: #28a745; color: white; }

button:disabled { background: #555; cursor: not-allowed; }

#logs { text-align: left; height: 200px; overflow-y: scroll; border: 1px solid #444; padding: 10px; background: #111; font-size: 12px; margin-top: 20px; }

#stats { text-align: left; background: #222; padding: 10px; border: 1px solid #444; margin-top: 20px; font-size: 12px; display: grid; grid-template-columns: 1fr 1fr 1fr; gap: 10px; }

.stat-item { padding: 5px; border-bottom: 1px solid #333; }

</style>

</head>

<body>

<div class="container">

<h1>WebRTC Desktop Stream (Debug Mode)</h1>

<div class="status-bar">

<div id="wsStatus" class="status disconnected">WS: Disconnected</div>

<div id="rtcStatus" class="status disconnected">WebRTC: Disconnected</div>

<div id="iceStatus" class="status disconnected">ICE: Disconnected</div>

</div>

<div class="controls">

<button id="connectBtn" class="btn-connect">1. Connect Signaling</button>

<button id="startBtn" class="btn-start" disabled>2. Start Stream</button>

</div>

<video id="remoteVideo" autoplay playsinline controls muted></video>

<div id="stats">

<div class="stat-item" id="stat-bytes">Bytes: 0</div>

<div class="stat-item" id="stat-packets">Packets: 0</div>

<div class="stat-item" id="stat-frames">Frames Decoded: 0</div>

<div class="stat-item" id="stat-keyframe">Key Frames: 0</div>

<div class="stat-item" id="stat-nack">NACKs: 0</div>

<div class="stat-item" id="stat-pli">PLIs: 0</div>

<div class="stat-item" id="stat-fps">FPS: 0</div>

<div class="stat-item" id="stat-jitter">Jitter: 0</div>

<div class="stat-item" id="stat-rtt">RTT: 0</div>

</div>

<h3>Logs:</h3>

<div id="logs"></div>

</div>

<script>

const signalingUrl = 'ws://localhost:8000';

let ws;

let pc;

let dc;

let statsInterval;

const config = {

iceServers: [{ urls: 'stun:stun.l.google.com:19302' }]

};

const log = (msg) => {

const logs = document.getElementById('logs');

const div = document.createElement('div');

div.textContent = `[${new Date().toLocaleTimeString()}] ${msg}`;

logs.appendChild(div);

logs.scrollTop = logs.scrollHeight;

console.log(msg);

};

const updateStats = async () => {

if (!pc) return;

const stats = await pc.getStats();

stats.forEach(report => {

if (report.type === 'inbound-rtp' && report.kind === 'video') {

document.getElementById('stat-bytes').textContent = `Bytes: ${(report.bytesReceived / 1024).toFixed(1)} KB`;

document.getElementById('stat-packets').textContent = `Packets: ${report.packetsReceived} (Lost: ${report.packetsLost})`;

document.getElementById('stat-frames').textContent = `Frames: ${report.framesDecoded} (Dropped: ${report.framesDropped})`;

document.getElementById('stat-keyframe').textContent = `Key Frames: ${report.keyFramesDecoded}`;

document.getElementById('stat-nack').textContent = `NACKs: ${report.nackCount}`;

document.getElementById('stat-pli').textContent = `PLIs: ${report.pliCount}`;

document.getElementById('stat-fps').textContent = `FPS: ${report.framesPerSecond || 0}`;

document.getElementById('stat-jitter').textContent = `Jitter: ${(report.jitter * 1000).toFixed(1)} ms`;

}

if (report.type === 'candidate-pair' && report.state === 'succeeded') {

document.getElementById('stat-rtt').textContent = `RTT: ${(report.currentRoundTripTime * 1000).toFixed(1)} ms`;

}

});

};

document.getElementById('connectBtn').onclick = () => {

ws = new WebSocket(signalingUrl);

ws.onopen = () => {

document.getElementById('wsStatus').className = 'status connected';

document.getElementById('wsStatus').textContent = 'WS: Connected';

document.getElementById('startBtn').disabled = false;

document.getElementById('connectBtn').disabled = true;

log('WebSocket connected');

};

ws.onmessage = async (event) => {

const msg = JSON.parse(event.data);

if (msg.sdp) {

log(`Received SDP: ${msg.type}`);

await pc.setRemoteDescription(new RTCSessionDescription(msg));

if (msg.type === 'offer') {

const answer = await pc.createAnswer();

await pc.setLocalDescription(answer);

ws.send(JSON.stringify(pc.localDescription));

log('Sent Answer');

}

} else if (msg.candidate) {

log('Received ICE Candidate');

try {

await pc.addIceCandidate(new RTCIceCandidate(msg));

} catch (e) {

log(`Error adding ICE candidate: ${e}`);

}

}

};

ws.onerror = (e) => log(`WebSocket error`);

ws.onclose = () => {

document.getElementById('wsStatus').className = 'status disconnected';

document.getElementById('wsStatus').textContent = 'WS: Disconnected';

log('WebSocket closed');

};

};

document.getElementById('startBtn').onclick = async () => {

if (pc) pc.close();

pc = new RTCPeerConnection(config);

pc.ontrack = (event) => {

log(`Received Track: ${event.track.kind} (${event.track.id})`);

if (event.track.kind === 'video') {

const videoEl = document.getElementById('remoteVideo');

const remoteStream = event.streams && event.streams[0] ? event.streams[0] : new MediaStream([event.track]);

videoEl.srcObject = remoteStream;

videoEl.onloadedmetadata = () => log(`Video Metadata Loaded: ${videoEl.videoWidth}x${videoEl.videoHeight}`);

videoEl.onresize = () => log(`Video Resized: ${videoEl.videoWidth}x${videoEl.videoHeight}`);

videoEl.onplay = () => log('Video Playing');

if (statsInterval) clearInterval(statsInterval);

statsInterval = setInterval(updateStats, 1000);

}

};

pc.onicecandidate = (event) => {

if (event.candidate) {

ws.send(JSON.stringify(event.candidate));

}

};

pc.onconnectionstatechange = () => {

const state = pc.connectionState;

document.getElementById('rtcStatus').textContent = `WebRTC: ${state}`;

document.getElementById('rtcStatus').className = state === 'connected' ? 'status connected' : 'status disconnected';

log(`WebRTC State: ${state}`);

};

pc.oniceconnectionstatechange = () => {

const state = pc.iceConnectionState;

document.getElementById('iceStatus').textContent = `ICE: ${state}`;

document.getElementById('iceStatus').className = (state === 'connected' || state === 'completed') ? 'status connected' : 'status disconnected';

log(`ICE State: ${state}`);

};

pc.onsignalingstatechange = () => {

log(`Signaling State: ${pc.signalingState}`);

};

// Create Data Channel

dc = pc.createDataChannel("control");

dc.onopen = () => log('DataChannel Open');

dc.onmessage = (e) => log(`DC Msg: ${e.data}`);

// Add Transceiver to receive video

pc.addTransceiver('video', { direction: 'recvonly' });

const offer = await pc.createOffer();

await pc.setLocalDescription(offer);

ws.send(JSON.stringify(pc.localDescription));

log('Sent Offer');

};

</script>

</body>

</html>
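The page above logs `pc.signalingState` on every change. As a rough model of the values one would expect to see during a plain offer/answer exchange, the sketch below encodes the basic transitions of the signaling state machine. It is a simplification that leaves out provisional answers and rollback, and the `nextSignalingState` and `trace` helpers are hypothetical.

```javascript
// Basic offer/answer transitions of RTCPeerConnection.signalingState.
// Keys are "<side>:<type>", where side is which description is being set.
const TRANSITIONS = {
  "stable": { "local:offer": "have-local-offer", "remote:offer": "have-remote-offer" },
  "have-local-offer": { "remote:answer": "stable" },
  "have-remote-offer": { "local:answer": "stable" },
};

function nextSignalingState(state, side, type) {
  const next = TRANSITIONS[state]?.[`${side}:${type}`];
  if (!next) throw new Error(`cannot set ${side} ${type} in state ${state}`);
  return next;
}

// Apply a sequence of steps and return every state that was visited.
function trace(steps) {
  let state = "stable";
  const seen = [state];
  for (const [side, type] of steps) {
    state = nextSignalingState(state, side, type);
    seen.push(state);
  }
  return seen;
}

// Offering side (the browser in this article): setLocalDescription(offer),
// then setRemoteDescription(answer).
const offererStates = trace([["local", "offer"], ["remote", "answer"]]);

// Answering side (the C++ program): setRemoteDescription(offer),
// then setLocalDescription(answer).
const answererStates = trace([["remote", "offer"], ["local", "answer"]]);

console.log("offerer: ", offererStates.join(" -> "));
console.log("answerer:", answererStates.join(" -> "));
```

Returning to `stable` on both sides corresponds to the point where SDP negotiation is complete; ICE and DTLS still have to finish before the connection state becomes `connected`.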

